http://www.hifi-writer.com/he/vhstobd/index.htm

I was looking around for VHS/DVD comparisons and I found this page. I'm thinking, what the hell is wrong with this guy's VHS tapes? I can swear 90% of the video tapes I used to have didn't look like that. It looks like the movies that guy had were recorded off an antenna 20 years ago in Extended Super Long Play, left in a dusty attic without the box, screwed around with by ADHD kids repeatedly hitting the fast forward and rewind buttons on a 30-year-old VHS player, and hooked up with RF cables that are hanging over an air conditioner to a screen capture device that just doesn't work well with older analog machines.

Why didn't they have multiple full-sized images to compare, as in 1080p? That would have been a much better comparison than zooming in 30x on some minute detail. Anyway, it probably does look about that bad, but when I'm watching a movie, I don't have my face pressed up against the TV screen.

psycopathicteen:

Perhaps you're looking through nostalgic rose-colored glasses at your memories of the era when VHS was all we had, and you didn't notice the artifacts. VHS has only enough chroma (color) bandwidth for 40 chroma samples per scanline. This means any feature narrower than 7 NES pixels will have its colors blurred. It's the analog counterpart to the MSX attribute clash seen in this conversion.

VHS is really that bad.

Wikipedia says "VHS tapes have approximately 3 MHz of video bandwidth and 400 kHz of chroma bandwidth. [...] In modern-day digital terminology, NTSC VHS is roughly equivalent to 333×480 pixels luma and 40×480 chroma resolutions".
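As a rough sanity check on those figures, here is a minimal sketch of how analog bandwidth maps to "equivalent pixels" per scanline. The active line time of about 52.6 µs is an assumed NTSC figure that is not stated in the thread, and the two-samples-per-cycle rule is the usual Nyquist approximation, so the results only land in the same ballpark as the quoted numbers.

```python
# Rough conversion from analog bandwidth to "equivalent pixels" per scanline.
# ACTIVE_LINE_SECONDS is an assumed figure for NTSC (about 52.6 microseconds
# of visible picture per line); it does not come from the thread.
ACTIVE_LINE_SECONDS = 52.6e-6


def equivalent_samples(bandwidth_hz, active_line_seconds=ACTIVE_LINE_SECONDS):
    """One cycle of the highest frequency can carry two samples (Nyquist)."""
    return 2 * bandwidth_hz * active_line_seconds


luma_samples = equivalent_samples(3.0e6)      # "approximately 3 MHz" of video bandwidth
chroma_samples = equivalent_samples(400e3)    # "400 kHz of chroma bandwidth"

# The NES draws 256 pixels across the line, so this is how many NES pixels
# share one chroma sample.
nes_pixels_per_chroma_sample = 256 / chroma_samples

print(round(luma_samples), round(chroma_samples), round(nes_pixels_per_chroma_sample, 1))
```

With these assumptions the luma figure comes out a little over 300 samples and the chroma figure a little over 40, which is consistent with the "roughly equivalent" wording in the quote and with colors smearing across several NES pixels.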

Here's his screenshot from ID4, just filtered to the above-mentioned constraints and cropped the same way as what he demonstrates:

Attachment:

vhsquality.jpg [ 23.32 KiB | Viewed 2367 times ]


This particular example doesn't even really show the abysmal chroma resolution, although the red lights in the scene would.

That said, comparing against his badly deinterlaced captures is really quite unfair; you wouldn't see this awful weave deinterlacing on a CRT SDTV.

psycopathicteen wrote:

I can swear 90% of the video tapes I used to have didn't look like that.

You have to consider that nearly all the VHS captures in that page are zoomed in, and you're viewing them on an HD monitor. At the normal size, viewed on an SD CRT TV, it would probably look more like what you remember. Aside from that, yeah, I guess VHS did look that bad.

My biggest complaints about VHS are not about the quality of the video though (the SD CRT TVs of the time did a good job of hiding the flaws of decent SP recordings), but about how flimsy the media is. Quality would degrade with each use, VCRs would often chew up the tapes, completely ruining parts of the video, and if you kept the tapes stored for a long time they'd often get moldy. VHS is just a terrible storage technology.

It might have to do with the fact that TVs refresh 60 times per second, so any noise would be less noticeable.

What exactly is wrong with the deinterlacing? I know that there can be time-base errors, so do CRTs do a better job of adjusting to uneven scanlines than modern equipment?

psycopathicteen wrote:

What exactly is wrong with the deinterlacing? I know that there can be time-base errors, so do CRTs do a better job of adjusting to uneven scanlines than modern equipment?

If you looked at 480i content on a CRT SDTV, you'd never even see the interlacing, because there is no deinterlacing. The scanlines go in the right place, you get ≈450 different visible vertical locations on screen where things can be, and (thanks to the flicker fusion threshold, the phi phenomenon, and beta movement) your brain stitches together the perceived pictures. But only 240 scanlines happen in each 1/60th of a second.

"But aren't there 480 scanlines of data? Where did the other data go?" It was thrown away when it was converted to NTSC in the first place. It is gone. You can guess what it was: by interpolating vertically (a "bob" deinterlacer), by assuming there's no motion and "weave"ing the fields together, or by doing some really clever things and estimating motion like mplayer's mcdeint does.

By using a weaving deinterlacer, he's blending content from other frames into the current one, so of course it looks bad, every bit as much as if you motion-blurred two or three frames of the HD video. Even just doublescanning ("bob") would be less misleading, given the number of words he spends complaining about the bad deinterlacing. Every time he complains about (de-)interlacing, comb lines, or fields at all (such as the motorcycle), it's nothing more than lots of (virtual) ink spilled saying that whatever he used to deinterlace the content did a bad job of it, which is not an intrinsic flaw of VHS.
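To make the difference between the two simple approaches concrete, here is a minimal sketch of "weave" and "bob" on tiny grayscale fields stored as lists of rows. The function names and the toy picture (a bright bar that moves two columns between fields) are made up for illustration; real deinterlacers work on full video frames and are considerably more careful.

```python
def weave(first_field, second_field):
    """Interleave two fields line by line, ignoring that they were captured
    1/60th of a second apart."""
    frame = []
    for first_line, second_line in zip(first_field, second_field):
        frame.append(first_line)
        frame.append(second_line)
    return frame


def bob(field):
    """Build a full-height frame from ONE field by interpolating the missing
    lines vertically (average of the line above and the line below)."""
    frame = []
    for i, line in enumerate(field):
        frame.append(line)
        below = field[i + 1] if i + 1 < len(field) else line
        frame.append([(a + b) / 2 for a, b in zip(line, below)])
    return frame


# A bright vertical bar (value 9) that moves from column 1 to column 3
# between the first field and the second field.
field_t0 = [[0, 9, 0, 0], [0, 9, 0, 0]]
field_t1 = [[0, 0, 0, 9], [0, 0, 0, 9]]

woven = weave(field_t0, field_t1)   # bar alternates columns: comb artifact
bobbed = bob(field_t0)              # bar stays in one column, at half vertical detail

for row in woven:
    print(row)
```

In the woven frame the bar sits in a different column on alternating lines, which is the comb pattern seen in the captures; the bobbed frame keeps the bar in one place at the cost of vertical resolution.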

It'd be nice if he'd been able to compare, say, VHS to (Laserdisc or DVD over composite), both via his capture card. It'd actually let you decouple the effects of NTSC and deinterlacing from the actual constraints of VHS.

My reaction is more "is DVD really that bad?!", and this is from somebody who watched DVDs on a PC.

He also said that he digitally compressed the VHS tapes using MPEG.

Yeah but VHS doesn't look that far from it still.

Something to take into account is that it's likely a lot of the issues get hidden in motion. Comparing still images is a bad idea when you consider that.

I suppose I could start a thread "Is the NES's video really that bad?"

On a flat-screen display, the stock composite signal is really that bad, if it displays at all.

On a CRT, I would argue that it isn't so bad. Dot crawl is a lot harder to notice when you are sitting six feet from the screen. A Famicom AV will show no jailbars with real cartridges. The jagged lines are not as prominent as they are in screen or video captures. The only way to experience the native composite signal justly is to look at a TV screen displaying it.

For a VHS tape, they look much nicer on a tube TV. I find this especially so for stuff shot on video camera, like old-school Doctor Who. A DVD will look better, but the VHS won't look bad.

Great Hierophant wrote:

For a VHS tape, they look much nicer on a tube TV.

Of course. The format was designed to be good enough for the quality bandwidth of its contemporary CRT TVs. If it had been any better than that, it would have been wasteful. On that kind of TV a VHS and a blu-ray would look just as good.

Great Hierophant wrote:

On a CRT, I would argue that [NES video] isn't so bad. Dot crawl is a lot harder to notice when you are sitting six feet from the screen. A Famicom AV will show no jailbars with real cartridges. The jagged lines are not as prominent as they are in screen or video captures.

The jagged lines were so prominent in Super Mario Bros. that they caused the Mario Paint player's guide to have wrong shapes for the bricks. This is the same effect as the stripes in the Solstice and Dr. Mario title screens.

rainwarrior wrote:

On that kind of TV a VHS and a blu-ray would look just as good.

Having acquired a Blu-ray player a couple of years before an HDTV, I can say that DVD and Blu-ray look exactly the same on a CRT SDTV, except maybe for the occasional high-motion scene that might result in visible compression artifacts in DVDs but not in Blu-rays. I'm absolutely certain VHS looks worse, though (even though I haven't used a VCR in over a decade!)... It's noticeably blurrier and shaky.

tokumaru wrote:

I'm absolutely certain VHS looks worse, though (even though I haven't used a VCR in over a decade!)... It's noticeably blurrier and shaky.

I think a good quality VHS would look the same, more or less? They tend to degrade a little every time you watch them, though. Most VHS tapes have seen a lot of use and are far from peak quality.

Does anyone remember laserdisc? Basically a non-degrading equivalent to VHS, served up on a giant platter.

Laserdiscs are awesome but they degrade as well. Not from use, just from time/exposure. Quality control on laserdisc manufacturing was pretty poor. The disc halves end up separating enough to allow oxygen in and the reflective layer oxidizes. Laserdisc uses an analog format for video so you end up with artifacts onscreen.

Also the fact that they can get damaged much more easily and unlike CDs they don't even have any means to correct for errors caused from that.

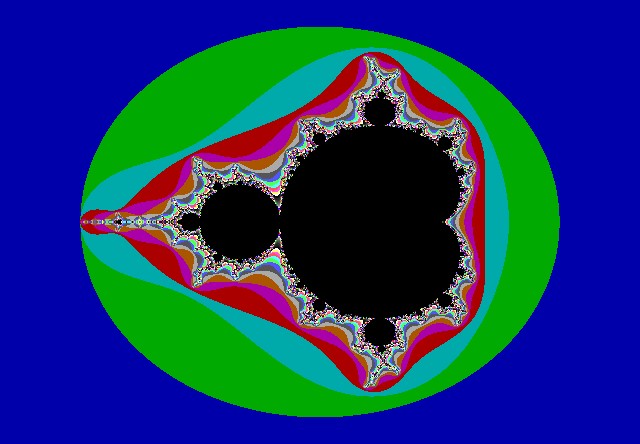

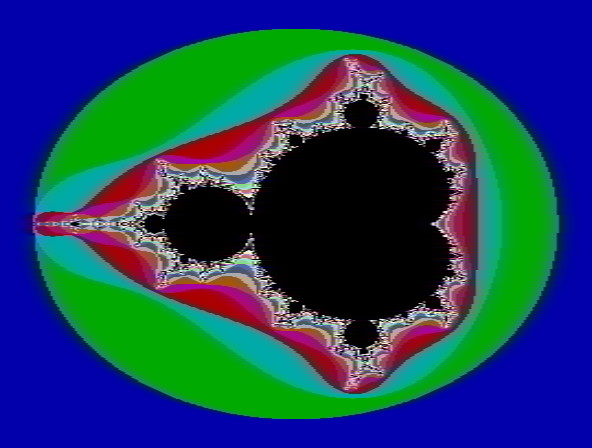

Mandelbrot fractal (generated by FRACTINT), with JPEG artifacts standing in for MPEG2 artifacts:

Attachment:

jpeg-artifacts.jpg [ 49.04 KiB | Viewed 1491 times ]


Same mandelbrot fractal, filtered to VHS constraints:

Attachment:

vhsbandwidth.jpg [ 45.06 KiB | Viewed 1491 times ]


Especially note the right side of the fractal.

lidnariq wrote:

Mandelbrot fractal

Yes, the difference is quite obvious when these are seen through today's monitors/TVs, but not nearly as obvious on TVs from when VHS was the dominant home video technology.

Sure. I chose the Mandelbrot with a garish palette specifically because it would show both VHS and DVD encodings in the worst possible light, as they are designed for real-world video.

Nonetheless, the point is that the differences and errors are representative of the actual signal quality degradation caused by the corresponding encoding.

A more representative sample of what VHS felt/looked like can be seen e.g. here.

On a CRT, you have the advantage of low persistence, so offsets between fields of video don't look as bad. Also, CRTs were built with PLLs to allow for wide variations in signal timing, while most video capture devices sample on a rigid 4 × 3.58 MHz pixel clock and have limited room to adjust that. VHS captures look a lot better with a time base corrector, but apparently this guy doesn't have one.
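A small sketch of why a rigid sample clock struggles with tape timing: at four times the NTSC color subcarrier, a nominal scanline is exactly 910 samples long, so any line that runs slow or fast slides sideways relative to the sampling grid. The subcarrier and line-rate constants are the standard NTSC values; the 0.5% timing error is a purely hypothetical figure chosen for illustration, not a measurement of any VCR.

```python
# Standard NTSC constants: the colour subcarrier is 315/88 MHz (about 3.58 MHz)
# and there are exactly 227.5 subcarrier cycles per scanline.
COLOR_SUBCARRIER_HZ = 315e6 / 88
LINE_RATE_HZ = COLOR_SUBCARRIER_HZ / 227.5
SAMPLE_CLOCK_HZ = 4 * COLOR_SUBCARRIER_HZ   # the "3.58 MHz x 4" pixel clock


def samples_per_line(line_rate_hz, sample_clock_hz=SAMPLE_CLOCK_HZ):
    """How many fixed-clock samples fit in one scanline at this line rate."""
    return sample_clock_hz / line_rate_hz


nominal = samples_per_line(LINE_RATE_HZ)

# Hypothetical: a line coming off tape 0.5% slower than nominal.
slow_line = samples_per_line(LINE_RATE_HZ * 0.995)
drift_in_samples = slow_line - nominal

print(round(nominal), round(drift_in_samples, 2))
```

Under that assumed error, the end of the line lands several samples away from where a fixed grid expects it; this is the kind of wobble a time base corrector (or a CRT's PLL) absorbs.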

Laserdiscs do have about the same bandwidth as broadcast NTSC video (twice the chroma bandwidth of VHS and less noise in the color), but since there's no error correction at all, any problems with the disc surface will lead to visible issues. Usually this is line dropouts or rainbow speckles ("laser rot"). Also on a lot of cheaper players, the analog audio carrier is not notched out completely leaving a bit of texture on the image.

Besides that, a lot of early laserdiscs were not made from quality film sources so you get the dust and scratches and optical flaws that were on the original scanned film print.

Sik wrote:

Also the fact that they can get damaged much more easily and unlike CDs they don't even have any means to correct for errors caused from that.

That's partially true.

Most newer (and by that I mean late-'80s) discs started having digital audio encoding (same as CDs), so at least there's that. And the means to correct for video errors was to make sure to have at least 3 frames of video for still slides. Heh, practical, I know.

The worst Laserdiscs are the ones that look like they were literally mastered from VHS tape. Worst of both worlds, that muddy-dim picture, interference, and weak audio encoded on the disc... forever!

It's fun when you're using a smarter kind of laserdisc player, and it interprets a patch of scratches and scuffs as encoded commands... It's only happened a few times in hundreds of hours of viewing, but it results in a random, immediate jump to some other part of the film (kind of like a record needle jumping).

Heh, I was unaware that there were disc rot problems with Laserdisc. I only know one person who had a collection, and he took good care of 'em, but of course nowadays he's replaced everything in it with DVD/BR, so I have no idea how his collection held up long-term. I mostly just enjoyed them for the big heavy discs and a sense of superiority over those rattly plastic VHS cassettes.

I love this video that deliberately compounds the signal loss from making a VHS copy over and over until the video completely degrades:

https://www.youtube.com/watch?v=mES3CHEnVyI

It sort of magnifies any inherent problems in VHS by turning them up as strong as they can go. Colour goes long before intensity, and it seems like both colour and intensity suffer from horizontal blurring a lot faster than noise. Audio degradation seems to be more dominated by noise, but maybe that's because audio is lower frequency information.

I'm trying to find the exact specifications on VHS frequencies, and I keep finding conflicting information. Some sources say the FM luma carrier is from 3.4 MHz to 4.4 MHz, other sources say it's from 3.8 to 4.8 MHz.

tokumaru wrote:

rainwarrior wrote:

On that kind of TV a VHS and a blu-ray would look just as good.

Having acquired a Blu-ray player a couple of years before an HDTV, I can say that DVD and Blu-ray look exactly the same on a CRT SDTV.

Probably depends on how you had it wired up. I gutted one of those Wii VGA cables to get at the component to RGB transcoder so I could build an adapter so I could plug my Blu-ray player into my old 28" widescreen CRT.

First of all, a HD image resampled down to SD resolution in RGB should have a greater colour resolution than DVD. I may have the terminology wrong, but I can say that, on the same SD screen, the Terminator 2 blu looks miles better than the PAL DVD.

Secondly, DVD resolution is 4:3, widescreen content is compressed in width to fit when recorded and then stretched to fill your TV screen when played back. Depending on the player, it might be outputting a native 16:9 image when it downscales to SD, but I have no way of verifying this.

Thirdly, in most cases (at least to my eyes) the HD compression artefacts get smoothed away in the down scaling, leaving a near crystal clear SD image.

In the end, it's all down to your connections, equipment and eyes. If you are using a PAL or NTSC based connection then you might as well stick with the DVD.

On the subject of VHS tapes, where I work we offer a simple VHS to DVD transfer service. I've seen badly recorded tapes and comparatively good-looking tapes. Both look equally bad once they've been through a DVD recorder's encoder.

Hojo_Norem wrote:

First of all, a HD image resampled down to SD resolution in RGB should have a greater colour resolution than DVD.

This is true.

Hojo_Norem wrote:

I may have the terminology wrong, but I can say that, on the same SD screen, the Terminator 2 blu looks miles better than the PAL DVD.

Using PAL already makes this comparison quite difficult to do correctly (the movie is almost guaranteed to be 24 FPS, which doesn't convert nicely to PAL), but even if you've taken that into account, resolution may not be the only difference between DVD and Blu-ray.

Hojo_Norem wrote:

Secondly, DVD resolution is 4:3, widescreen content is compressed in width to fit when recorded and then stretched to fill your TV screen when played back.

DVD resolution is typically 720x480 (NTSC) or 720x576 (PAL), and the same resolution is used for both 4:3 and 16:9 content. The player must stretch the image to one of those two ratios in order to display it correctly.
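The stretch described here can be expressed as a pixel aspect ratio: the shape each stored pixel must take so that the same 720-wide grid fills either a 4:3 or a 16:9 screen. The sketch below is a simplification that treats all 720 columns as visible picture; real video standards use slightly different figures, so take the exact fractions as illustrative.

```python
from fractions import Fraction


def pixel_aspect_ratio(width, height, display_aspect):
    """Width/height of one displayed pixel, given the stored grid and the
    aspect ratio the picture must fill on screen."""
    return display_aspect / Fraction(width, height)


ntsc_4_3 = pixel_aspect_ratio(720, 480, Fraction(4, 3))
ntsc_16_9 = pixel_aspect_ratio(720, 480, Fraction(16, 9))
pal_16_9 = pixel_aspect_ratio(720, 576, Fraction(16, 9))

# Values below 1 mean tall, narrow pixels; values above 1 mean wide pixels.
print(ntsc_4_3, ntsc_16_9, pal_16_9)
```

The same stored frame therefore needs narrower-than-square pixels for 4:3 content and wider-than-square pixels for 16:9 content, which is why the player (or TV) has to know which ratio was intended.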

Hojo_Norem wrote:

Depending on the player, it might be outputting a native 16:9 image when it downscales to SD, but I have no way of verifying this.

NTSC has no way to display a native 16:9 picture; all NTSC DVD players must either reduce the vertical resolution or crop some of the picture from the sides. (I think I read somewhere that PAL is capable of signalling a widescreen picture, but I don't know anything about this because PAL equipment is uncommon in North America.)

Hojo_Norem wrote:

Thirdly, in most cases (at least to my eyes) the HD compression artefacts get smoothed away in the down scaling, leaving a near crystal clear SD image.

This is true, unless the artifacts are quite extreme.

Hojo_Norem wrote:

On the subject of VHS tapes, where I work we offer a simple VHS to DVD transfer service. I've seen badly recorded tapes and comparatively good-looking tapes. Both look equally bad once they've been through a DVD recorder's encoder.

You can get much better results by doing the encoding and mastering on a PC.

Neither NTSC nor PAL can signal in-band that their content is anamorphic 16:9; however, almost all PAL markets use SCART connectors. With the advent of 16:9 TVs, SCART magically grew trinary signaling to indicate that content was 16:9.

I'm wrong! There is an in-band 16:9 signal: https://en.wikipedia.org/wiki/Widescreen_signaling

I use both VHS and DVD. VHS isn't so bad in SP mode. Many VCRs these days cannot record in LP mode though, so you have to use EP mode, or waste a DVD even if you don't need to keep the recording! I have used them in the past month actually, both recording and playback. In the past few months I have recorded Slugterra on VHS in EP mode. I once noticed some text on the screen for perhaps one frame or a few frames during what was probably supposed to be a commercial break, and I could not read it even when I tried pausing the tape. How much do you think this changes depending on whether it's an animated show, live action, etc.?

zzo38 wrote:

In the past few months I have recorded Slugterra on VHS in EP mode. I once noticed some text on the screen for perhaps one frame or a few frames during what was probably supposed to be a commercial break, and I could not read it even when I tried pausing the tape. How much do you think this changes depending on whether it's an animated show, live action, etc.?

I think that's more the result of commercial producers making fine print smaller than SDTV is capable of showing.

tepples wrote:

I think that's more the result of commercial producers making fine print smaller than SDTV is capable of showing.

Oh, OK. I didn't know that.

Yeah, ad guys see 1080i and say "1080-I gotta get me some of this! Now that I have even bigger space for disclaimers, I can make more outrageous claims." Then the commercial is shrunk down to 640x360 and blurred vertically so that it doesn't flicker horribly when interlaced, and all the text is too small to make out.

Just wait until 4K broadcasting becomes the big thing. Oh boy...

1080i has always seemed the worst idea to me. I really don't understand why we needed an (awful) interlaced mode in HD when we already had 720p if you couldn't handle the bandwidth.

rainwarrior wrote:

1080i has always seemed the worst idea to me. I really don't understand why we needed an (awful) interlaced mode in HD when we already had 720p if you couldn't handle the bandwidth.

The first consumer HDTVs were CRT HDTVs, and I guess 1080i was a cheaper upgrade to the tube than 720p.

Interlacing was always a design decision for CRTs, since deinterlacing isn't necessary when the TV just does the right thing in the first place. If LCDs were already predominant at the time we'd have chosen to send 1080p30 instead.

1080i is substantially higher resolution than 720p, and even with deinterlacing artifacts I can see the improvement.

If you want something to actually complain about in the ATSC standard, it's the required overscan.

Overscan shouldn't exist... waste of bandwidth among other things.

Customers buying the most expensive TVs would counterargue that if overscan didn't exist, the money spent on the edges of their TV tubes would go to waste.

Besides, early TVs were completely circular, then later ellipsoid with the top & bottom of the image pinched, etc...

Later, overscan got put to good use by carrying digital information, Teletext, telesoftware, etc.

Blanking areas are something else entirely...

Overscan in NTSC? Fine. Early CRTs just weren't precise enough.

Required overscan in ATSC? Abominable. Taking a 720p input stream, scaling it up by 110%, and then displaying the center upscaled 720 lines? WTF were they thinking?
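To put a number on what that costs, here is a minimal sketch of how much of the source picture survives when the image is enlarged and only the centre is shown. The 110% scale factor is the one quoted in the post; actual sets vary, so treat it as an example value.

```python
def visible_source_extent(source_size, overscan_scale):
    """How many source lines (or columns) remain on screen after the picture
    is enlarged by overscan_scale and cropped back to the panel size."""
    return source_size / overscan_scale


OVERSCAN_SCALE = 1.10   # the "110%" figure from the post

visible_lines = visible_source_extent(720, OVERSCAN_SCALE)
visible_columns = visible_source_extent(1280, OVERSCAN_SCALE)
visible_area_fraction = 1 / OVERSCAN_SCALE ** 2

print(round(visible_lines, 1), round(visible_columns, 1), round(visible_area_fraction, 3))
```

At that scale roughly a sixth of the transmitted picture area is never displayed, and the lines that are displayed no longer map one-to-one onto the panel's pixels.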

On top of that, a good chunk of the TVs I see sold here still crop out the edges of the image... so you lose even more image when shit like that is done. On some you can fix that in the service menu, but only on some.

lidnariq wrote:

Overscan in NTSC? Fine. Early CRTs just weren't precise enough.

Required overscan in ATSC? Abominable.

The first ATSC sets were CRTs, and they still weren't precise enough to go all the way out to the edges.

lidnariq wrote:

Interlacing was always a design decision for CRTs, since deinterlacing isn't necessary when the TV just does the right thing in the first place. If LCDs were already predominant at the time we'd have chosen to send 1080p30 instead.

1080i is substantially higher resolution than 720p, and even with deinterlacing artifacts I can see the improvement.

If you want something to actually complain about in the ATSC standard, it's the required overscan.

So, it looks like the first round of HD CRTs supported 480i and 1080i commonly. I guess that makes some sense that it was easiest to implement if they were both interlaced?

1080i is only substantially higher resolution at 30 Hz. At 60 Hz it's 921,600 pixels per 720p frame vs 1,036,800 pixels per 1080i field, not as big a difference.
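The two figures follow directly from the raster sizes: a 720p picture is a whole 1280×720 frame, while each 1080i picture delivered 60 times a second is a single field containing half of the 1080 lines. A minimal sketch of that arithmetic:

```python
def pixels_per_picture(width, height, interlaced):
    """Pixels delivered in one 1/60th-second picture: a full frame for
    progressive video, a single field (half the lines) for interlaced."""
    lines = height // 2 if interlaced else height
    return width * lines


frame_720p = pixels_per_picture(1280, 720, interlaced=False)
field_1080i = pixels_per_picture(1920, 1080, interlaced=True)
frame_1080 = pixels_per_picture(1920, 1080, interlaced=False)

print(frame_720p, field_1080i, frame_1080)
print(field_1080i / frame_720p)
```

Per 1/60th of a second, 1080i carries only about an eighth more pixels than 720p; the big gap appears only when two fields are combined into one 30 Hz frame.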

If 1080i kind of hung on as a bridge for devices that also supported traditional 480i, maybe overscan came with that for a similar reason. i.e. the 480i mode is going to do it, easiest if the HD modes work the same? I dunno.

I've never even seen an HD CRT in real life. I didn't even realize they existed until tepples started referring to them. All the HD TVs I've ever dealt with were LCD or Plasma. For those the overscan seems entirely stupid, and interlacing sucks unless your content is <= 30 fps (and at any rate, interlacing looks worse on an LCD than a CRT). Mostly I'm just annoyed that my PS3 defaults to "1080i" as a "superior" supported resolution on my 720p TV, when I'd never want to use 1080i at all. (Gotta disable 1080i every time my PS3 video settings reset.)

NTSC/480i: H=16kHz, V=60Hz

EDTV/VGA/480p: H=32kHz, V=60Hz

1080i: H=34kHz, V=60Hz

720p: H=45kHz, V=60Hz

1080p: H=67kHz, V=60Hz

In a CRT with a magnetically-deflected tube, the greater the range of horizontal deflection rates it can support, the more expensive. (More expensive than just the additional glass, anyway)
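The horizontal rates in that list can be reproduced as total lines per frame multiplied by frames per second. The total line counts below (525, 750, 1125, all including blanking) are the usual broadcast timings and are assumptions here, since the post gives only the resulting rates; nominal 60 Hz is used rather than 59.94 Hz.

```python
# (mode, total lines per frame including blanking, full frames per second)
# Interlaced modes show 60 fields per second but only 30 full frames.
MODES = [
    ("480i", 525, 30),
    ("480p", 525, 60),
    ("1080i", 1125, 30),
    ("720p", 750, 60),
    ("1080p", 1125, 60),
]


def horizontal_rate_khz(total_lines, frames_per_second):
    """Scanlines drawn per second, in kHz."""
    return total_lines * frames_per_second / 1000


rates = {name: horizontal_rate_khz(lines, fps) for name, lines, fps in MODES}

for name, khz in rates.items():
    print(f"{name}: H = {khz:.2f} kHz")
```

With these line counts, 1080i needs only slightly faster horizontal deflection than 480p, while 720p works out to about 45 kHz and 1080p to about 67 kHz, which fits the argument that 1080i was the cheaper step up for a tube.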

rainwarrior wrote:

I've never even seen an HD CRT in real life.

NovaSquirrel's mom (my aunt) is married to someone who owns one.

Quote:

interlacing sucks unless your content is <= 30 fps

With certain developers prioritizing lighting complexity over frame rate, <= 30 fps has become common.

Quote:

Mostly I'm just annoyed that my PS3 defaults to "1080i" as a "superior" supported resolution on my 720p TV, when I'd never want to use 1080i at all. (Gotta disable 1080i every time my PS3 video settings reset.)

If you have a 1080 TV, a game locked to 30 fps, and a cable that can't do "high speed" HDMI, 1080i is superior. You need an HDMI cable rated for high speed to guarantee a stable 1080p 60 Hz picture. This was important back in the early PS3 days when not all HDMI cables in stores were high speed.

Standard HDMI: 720p, 1080i, or 1080p/30

High speed HDMI: 1080p/120 (enough for 3D) or 4K/30
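One way to see why 720p, 1080i and 1080p/30 group together is to compare pixel clocks. The sketch below assumes the common total raster sizes including blanking (1650×750 for 720p, 2200×1125 for 1080-line modes) and assumes standard-rated cables are tested up to a 74.25 MHz pixel clock; neither figure is given in the post, so check them against the HDMI and CEA-861 documents before relying on them.

```python
# Assumed test ceiling for a "standard" cable, in pixel-clock terms.
STANDARD_CABLE_MAX_HZ = 74_250_000

# mode: (total width, total height, full frames per second), blanking included.
# These raster sizes are assumptions taken from the usual 60 Hz timings.
MODES = {
    "720p60": (1650, 750, 60),
    "1080i60": (2200, 1125, 30),   # 60 fields = 30 full frames
    "1080p30": (2200, 1125, 30),
    "1080p60": (2200, 1125, 60),
}


def pixel_clock_hz(total_width, total_height, frames_per_second):
    """Pixels transmitted per second, counting blanking intervals."""
    return total_width * total_height * frames_per_second


fits_standard_cable = {
    name: pixel_clock_hz(*timing) <= STANDARD_CABLE_MAX_HZ
    for name, timing in MODES.items()
}

for name, fits in fits_standard_cable.items():
    print(name, pixel_clock_hz(*MODES[name]), "ok" if fits else "needs high speed")
```

Under these assumptions the first three modes all need the same pixel clock, and 1080p at 60 Hz needs exactly double.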

tepples wrote:

rainwarrior wrote:

interlacing sucks unless your content is <= 30 fps

With certain developers prioritizing lighting complexity over frame rate, <= 30 fps has become common.

I specifically meant content that is a consistent framerate (e.g. film). Games are not that, in general. It's usually erratic framerate (often locked to no more than 30fps, though some games target 60fps for typical load). An unstable framerate plays terribly with interlacing. Unless the frames are paired consistently it's absolutely awful. Some TVs at least have some form of detection for telecine/etc. that can help with this, but plenty aren't up to the task of re-synching interlaced pairs for an unstable framerate.

tepples wrote:

Quote:

Mostly I'm just annoyed that my PS3 defaults to "1080i" as a "superior" supported resolution on my 720p TV, when I'd never want to use 1080i at all. (Gotta disable 1080i every time my PS3 video settings reset.)

If you have a 1080 TV, a game locked to 30 fps, and a cable that can't do "high speed" HDMI, 1080i is superior. You need an HDMI cable rated for high speed to guarantee a stable 1080p 60 Hz picture. This was important back in the early PS3 days when not all HDMI cables in stores were high speed.

1080i is not superior to 720p for an unstable framerate. I'd take upscaled 720p over 1080i for games any day of the week.

This is even assuming that the TV displays 1080i at interlaced 60 Hz, rather than de-interlacing it to 30 Hz, which plenty of LCD TVs will do.

tepples wrote:

NovaSquirrel's mom (my aunt) is married to someone who owns one.

I had no clue that anyone on this forum was that closely related to anyone else.

tepples wrote:

With certain developers prioritizing lighting complexity over frame rate, <= 30 fps has become common.

I personally think that 60fps should be the gold standard, in that making a game run at 60fps should be your first priority. I'd much rather play a game that runs at 720p @ 60fps than one at 1080p @ 30fps. I might be a little biased toward framerate over resolution, though, because I have fairly bad vision and I'm too stubborn to wear my glasses, so 720p doesn't look any different than 1080p to me. I just think that you should at least try to match the framerate of an (NTSC) NES game.

I'm just curious, but do any GameCube/Xbox/PS2 games have it to where the background casts shadows, and not just the main character or something else? I mean like this:

tepples wrote:

Standard HDMI: 720p, 1080i, or 1080p/30

High speed HDMI: 1080p/120 (enough for 3D) or 4K/30

Is there such a thing as a super high speed HDMI cable, like 4K/60 or 120?

Espozo, I actually made those shadow-volume images. Ha ha. I wrote that program 9 years ago:

http://rainwarrior.ca/dragon/effects.html

By the way, "shadow mapping" is a lot more common in games than shadow volumes, but I'm sure you read that in the wikipedia article you took the image from.

rainwarrior wrote:

By the way, "shadow mapping" is a lot more common in games than shadow volumes, but I'm sure you read that in the wikipedia article you took the image from.

I just did a google images search.

I did read it now though. I've noticed that in some games, shadows from a flat wall or something will sometimes actually look like a bilinear filtered staircase, which is probably because of a low resolution shadow map. Have shadow volumes started to rise in popularity now? Another thing I'm curious about: is the shadow map actually just another polygon located over whatever the shadow is being cast on, or is the texture of the surface underneath it actually altered, if that makes sense? Either way, I'd imagine that something like ink in Splatoon is kind of similar to an opaque shadow map in how it's rendered? Sorry to get off on a tangent.

Shadow mapping requires hardware support for a depth texture. I think everything has had this since around about the PS2 era.

Shadow volumes have fewer requirements, but typically result in a lot more pixels rendered, and extra calculation, so they're usually not as performance effective. In a lot of earlier games only a few objects would be allowed to cast shadows, or other methods might be employed (e.g. just projecting the object on a flat plane and rendering it as black).

Once shadow mapping was available in hardware it became the dominant method and still is, so far as I've seen. The telltale sign of shadow mapping is that you can see the "pixels" in the shadow map. Just find something where the shadow is falling at an oblique angle or some other case where the resolution is poor, and you will see some boxy/fuzzy edges on it. Shadow Volumes are resolution-independent so they're always crisp.

I know Doom 3 used shadow volumes. I don't really have a list of other games that might use them.

Doom 3 also hit upon an independent reinvention of "depth fail", a shadow volume rendering technique that it turned out a couple Creative Labs engineers had patented (US Patent 6,384,822). I seem to remember that the design-around put in place for the 4Q 2011 GPL release of id Tech 4, the engine of Doom 3, cost a bit of performance. Perhaps shadow mapping grew in use as another design-around.

tepples wrote:

Doom 3 also hit upon an independent reinvention of "depth fail", a shadow volume rendering technique that it turned out a couple Creative Labs engineers had patented (US Patent 6,384,822). I seem to remember that the design-around put in place for the 4Q 2011 GPL release of id Tech 4, the engine of Doom 3, cost a bit of performance. Perhaps shadow mapping grew in use as another design-around.

Their patented method wasn't really as big a deal as it sounds. It was basically just a minor optimization for generating depth volumes. There are other ways to generate depth volumes, just that very specific optimization got patented. It's a patent on one implementation of shadow volumes, not a patent on all shadow volumes.

Also, this patent was long enough ago that the GPU pipelines have significantly advanced. We have hull/tessellation/domain/geometry/compute shader steps in the graphics pipeline now that can do a lot of the work on the GPU.

Shadow volumes are less popular than shadow maps for two main reasons. The first is that they tend to produce a high volume of pixel fill. The second is that they produce "hard" shadows. There are many good techniques for shadow mapping that allow softening/fading of the shadow edges, which is a commonly requested feature. I think techniques for softening shadow volumes exist, but they're less practical. I don't believe the patent is a significant deterrent (it's not in any way essential to shadow volumes).

Realistically though, how could they really even tell if someone copied his implementation, how could anyone even tell?

rainwarrior wrote:

I think everything has had this since around about the PS2 era.

How processing intensive is it for these systems though, even if it is in hardware, because they never seem to use it. I guess this "shadow map" is actually written over polygons in hardware, in that it isn't like there's a higher priority alpha blended texture over it? I still don't get how something like paint or blood is rendered on top of something.

Espozo wrote:

Realistically though, how could they really even tell if someone copied his implementation, how could anyone even tell?

There are diagnostic debugging tools for GPUs that would make it relatively easy to check. I think games that use shadow volumes are uncommon enough that it might not be very hard to check most of them, if you were interested in enforcing your patent.

Espozo wrote:

How processing intensive is it for these systems though, even if it is in hardware, because they never seem to use it. I guess this "shadow map" is actually written over polygons in hardware, in that it isn't like there's a higher priority alpha blended texture over it? I still don't get how something like paint or blood is rendered on top of something.

A shadow map is like if you projected a texture from the point of view of the light. Imagine putting a stencil over a light, and seeing the shape it makes. The stencil is the texture, the shape is the projected texture. Instead of projecting a colour or pattern on the scene, it projects a depth value. If the projected shadow value is deeper than the object, the object is lit; if it's shallower, the object is in shadow.

You make the shadow map basically by rendering the whole scene again (or just the shadow-casters) from the point of view of the light. If you're naive about it and make everything cast shadows, this would double your render time with just 1 light, triple it with 2 lights, etc. (An oversimplification, but roughly true.) You reduce the shadow map calculation time by reducing shadow casters, reducing the number of shadow casting lights, reducing the resolution of the shadow maps, etc.
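The two steps described above (render depth from the light, then compare depths when shading) can be sketched in a toy one-dimensional world. This is only an illustration under loud assumptions: the light shines straight down from a fixed height, each shadow-map texel covers one integer x position, and the names `LIGHT_HEIGHT`, `BIAS`, `build_shadow_map` and `is_lit` are hypothetical. Real engines do this on the GPU with depth textures and a projection from the light's point of view.

```python
LIGHT_HEIGHT = 10.0   # assumed: light sits this far above the floor, shining straight down
MAP_SIZE = 8          # assumed: one shadow-map texel per integer x position
BIAS = 1e-3           # small tolerance so a surface does not shadow itself


def build_shadow_map(casters, map_size=MAP_SIZE):
    """Pass 1: 'render' the shadow casters from the light and keep, for each
    texel, the depth of the nearest thing the light can see."""
    shadow_map = [LIGHT_HEIGHT] * map_size   # empty texels see all the way to the floor
    for x, height in casters:
        depth_from_light = LIGHT_HEIGHT - height
        shadow_map[x] = min(shadow_map[x], depth_from_light)
    return shadow_map


def is_lit(shadow_map, x, height):
    """Pass 2: a point is lit if nothing nearer to the light was recorded
    at its texel, i.e. the stored depth is at least as deep as the point."""
    depth_from_light = LIGHT_HEIGHT - height
    return depth_from_light <= shadow_map[x] + BIAS


def render_passes(shadow_casting_lights):
    """Naive cost model from the post: one normal pass plus one per light."""
    return 1 + shadow_casting_lights


casters = [(3, 4.0)]                              # a box whose top is at height 4 over texel 3
shadow_map = build_shadow_map(casters)

floor_under_box = is_lit(shadow_map, 3, 0.0)      # box is nearer the light: in shadow
top_of_box = is_lit(shadow_map, 3, 4.0)           # the nearest surface itself: lit
open_floor = is_lit(shadow_map, 5, 0.0)           # nothing above it: lit

print(floor_under_box, top_of_box, open_floor, render_passes(2))
```

Because the map has a fixed number of texels, everything that falls within one texel shares a single stored depth, which is where the blocky shadow edges mentioned earlier in the thread come from.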

People have been using versions of these techniques for shadows since the 1970s. They are scalable, and there are solutions that can fit pretty much any level of hardware/performance you need to meet.

tepples wrote:

rainwarrior wrote:

I've never even seen an HD CRT in real life.

NovaSquirrel's mom (my aunt) is married to someone who owns one.

Over here there's one that I can't tell whether it's a CRT or LCD (it has depth but not too much). In any case its ability to somehow make Mega 3's composite output look crystal clear (pixels with hard edges) and without any forced deinterlacing makes it awesome. (I should note down the model, I know it's a Philips one).

rainwarrior wrote:

I know Doom 3 used shadow volumes. I don't really have a list of other games that might use them.

We should probably look at the Dreamcast, because ironically its hardware made shadow volumes trivial (i.e. the exact opposite of everything else).