Anes wrote:

According to the wiki:

Quote:

76543210

||||||||

||||++++- Hue (phase, determines NTSC/PAL chroma)

||++----- Value (voltage, determines NTSC/PAL luma)

++------- Unimplemented, reads back as 0

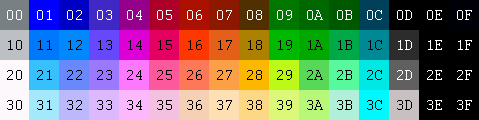

The bits of the color number are not strictly divided into hue and value like that; that's a very rough interpretation. If you do an image search for "NES palette", you can find images like this one that give a rough overview of how the NES color numbers work:

Attachment:

the_NES_palette_by_erik_red.png [ 4.38 KiB | Viewed 3223 times ]


Notice the columns where the color number ends in _0, _D, _E, and _F are all grayscale. In HSV, these colors have an undefined hue and zero saturation. The HSV value is somewhere between 0 for black and 1 for white.

Only the columns for color numbers that end in _1 to _C have a defined hue and a non-zero saturation. There are 12 columns of chromatic colors, so you might want to divide the 360 degree hue range into 12 parts each 30 degrees apart. If we take a starting guess that column _6 is the pure red hue (0 degrees), then you might add 30 degrees each column toward green, then blue, then back to red.*

Code:

color HSV hue (degrees)

----- -------

_1 210

_2 240

_3 270

_4 300

_5 330

_6 0

_7 30

_8 60

_9 90

_A 120

_B 150

_C 180
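The table above can be sketched in code. This is a minimal Python sketch, assuming (as guessed above) that column _6 sits at 0 degrees and that each column adds 30 degrees. The function name `approx_hue_degrees` is made up for illustration, and it returns `None` for the grayscale columns _0, _D, _E, and _F.

```python
def approx_hue_degrees(color):
    """Rough HSV hue guess for an NES color number (0x00-0x3F)."""
    column = color & 0x0F  # low 4 bits select the column (_0 to _F)
    if column == 0x0 or column >= 0xD:
        return None  # grayscale column: hue is undefined
    # Assumption: column _6 is pure red (0 degrees), 30 degrees per column.
    return ((column - 6) * 30) % 360


for color in (0x11, 0x16, 0x2A, 0x30):
    print(f"${color:02X}: {approx_hue_degrees(color)}")
```

The row bits (the upper part of the color number) are ignored here on purpose, since in this approximation they only affect brightness, not hue.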

To really understand how the color works, you have to read up on NTSC color signals. My basic understanding is this: the colors for a line of video are encoded into one signal that changes as the line progresses across the screen. If the signal changes slowly, it's interpreted as grayscale colors. If the signal fluctuates quickly, it's interpreted as chromatic colors.

Take a look at the nesdev wiki article "NTSC video", section "Brightness Levels". For now, look at the "normalized" values for the rows "Color xx". For these normalized values, 0 represents black and 1 represents white.

For the grayscale colors, I think the HSV value is the normalized value from this table. Per the note under the table, columns _E and _F use the same level as color 1D (= 0 = black). And as I mentioned above, for grayscale colors, the hue is undefined and the saturation is zero.

For the chromatic colors, the signal level fluctuates between a high level (the _0 level on that row) and a low level (the _D level on that row). In HSV, I think the saturation is the height of the quickly fluctuating signal from low to high, and the value is the height of the middle of the fluctuating signal above the zero black level.

saturation = high - low

value = (high + low) / 2

Code:

             _
            |      ^   ^   ^
saturation  |     | | | | | |
            |     | | | | | |     -
            |     | | | | | |     |
             _   .   v   v   .    | value
                                  |
0 -------------                   -
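The two formulas above translate directly into code. This is a minimal sketch, assuming `low` and `high` are the normalized levels of the row's _D and _0 columns as read from the wiki table. The table values are not reproduced here, and `approx_saturation_value` is a made-up name.

```python
def approx_saturation_value(low, high):
    """Apply saturation = high - low and value = (high + low) / 2."""
    saturation = high - low
    value = (high + low) / 2
    return saturation, value


# Hypothetical levels, chosen only to show the arithmetic.
print(approx_saturation_value(0.25, 0.75))
# A grayscale color does not fluctuate, so low == high and saturation is 0.
print(approx_saturation_value(0.5, 0.5))
```

No clamping is applied, so levels outside the 0 to 1 range pass straight through. Whether to clamp them is a separate decision that this sketch does not make.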

* I think the real way to decode NTSC colors is much more complicated, but this might be a starting approximation. For example, you may have to tweak the starting hue to something other than zero. Also, I think that in the real mapping from NTSC color to HSV, the hue values may not be equal steps of 30 degrees. For example, on a vectorscope, the 6 main chromatic RGB colors (red, yellow, green, cyan, blue, and magenta) are unevenly spaced around the NTSC color phase circle, but in HSV these 6 main colors are equally spaced around the hue circle. The 12 NES hues are equally spaced around the NTSC color phase circle, so a more complicated conversion is needed to get them to the "correct" HSV hue values.
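To get a feel for that uneven spacing, here is a small Python sketch that steps a chroma phase angle in equal 30 degree increments through the YIQ color space and converts each result to an HSV hue with the standard `colorsys` module. The luma of 0.5, the chroma amplitude of 0.2, and the starting phase of 0 are arbitrary assumptions. The angle is also not aligned to the actual NES colorburst phase, so the numbers only illustrate the effect and are not real NES hues.

```python
import colorsys
import math


def hsv_hue_for_phase(phase_degrees, luma=0.5, amplitude=0.2):
    """HSV hue (degrees) of a YIQ color at the given chroma phase angle."""
    phase = math.radians(phase_degrees)
    i = amplitude * math.cos(phase)
    q = amplitude * math.sin(phase)
    r, g, b = colorsys.yiq_to_rgb(luma, i, q)
    h, _s, _v = colorsys.rgb_to_hsv(r, g, b)
    return h * 360.0


def circular_step(a, b):
    """Smallest angle in degrees between two hues."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


# Twelve equally spaced phases, like the 12 chromatic NES columns.
hues = [hsv_hue_for_phase(30 * k) for k in range(12)]
steps = [circular_step(hues[k], hues[(k + 1) % 12]) for k in range(12)]
for k in range(12):
    print(f"phase {30 * k:3d} -> hue {hues[k]:6.1f}, step to next {steps[k]:5.1f}")
```

The printed steps are not all 30 degrees, which is the point of the footnote: equal steps in chroma phase do not give equal steps in HSV hue.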